Your Agent Can Now Phone a Friend

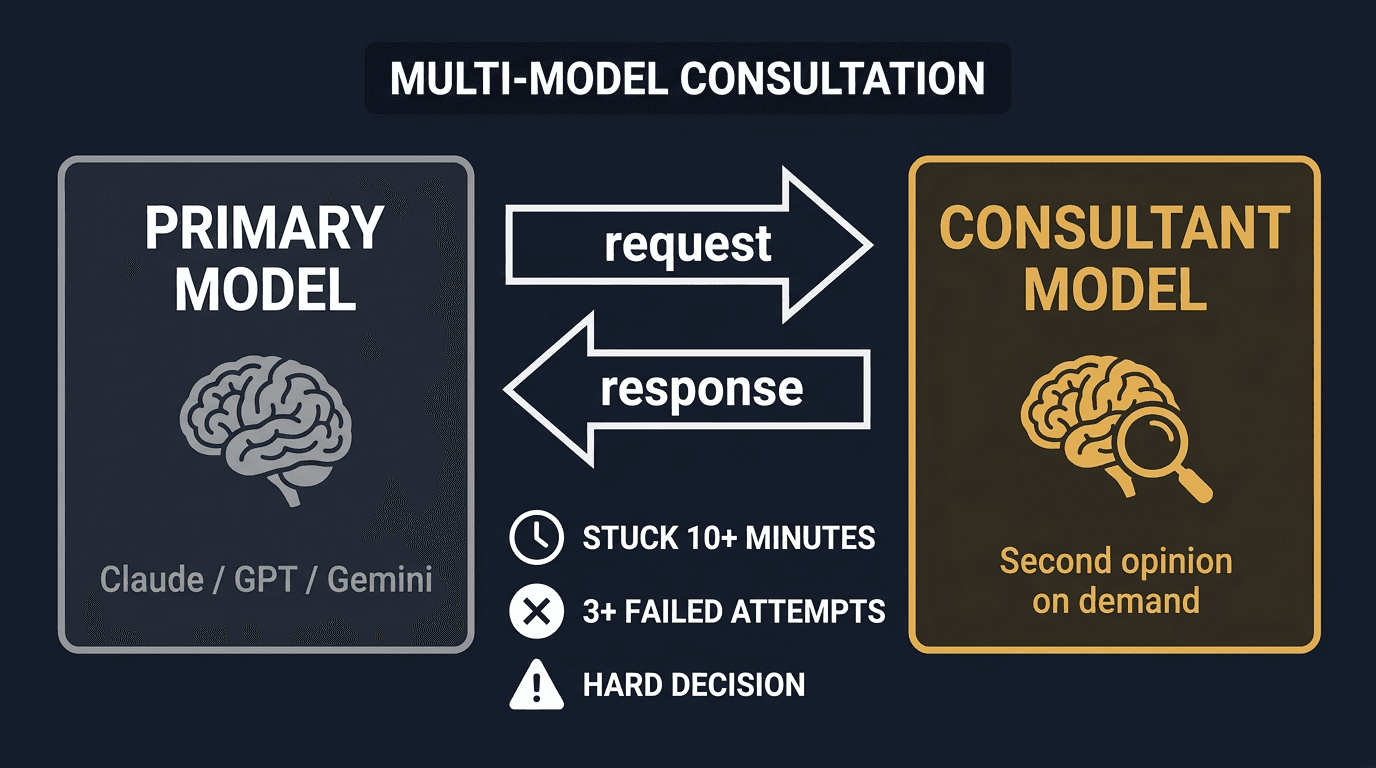

Introducing omega_consult_gpt: a new MCP tool that lets Claude agents consult GPT-5.3 mid-session when they want a second opinion.

Every agent hits walls. A gnarly bug that resists three debugging attempts. An architecture decision with no clear winner. A domain where another model has stronger priors. Until now, you'd restart in a different context and lose everything. Today, OMEGA agents can stay in their session and bring another intelligence in.

The Multi-Model Problem

AI agents are getting good at most tasks. But no single model is best at everything. Claude excels at code generation and long-context reasoning. GPT was trained on different signals, so it sometimes catches what Claude pattern-matches past. The question has always been: how do you get both without breaking your workflow?

The naive answer is "switch providers." But that throws away everything: session context, memory state, coordination with other agents. You don't want to replace your primary intelligence. You want to consult a second one.

Consultation, Not Replacement

omega_consult_gpt is OMEGA's 14th MCP tool. It lets Claude call GPT mid-session, get a structured response, and decide what to do with it. Claude stays primary. GPT provides a second opinion on demand.

The response comes back with a header showing which model answered (## GPT Consultation (gpt-5.3)), so Claude (and you) know exactly where the advice came from. It's a consultation, not a handoff.
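As a rough illustration of how a caller might use that header, here is a minimal Python sketch that separates the model name from the advice. Only the header string comes from this post. The function name `split_consultation` is hypothetical, and the layout of the body after the header is an assumption.

```python
import re
from typing import Optional, Tuple

# Header format quoted in the post: "## GPT Consultation (gpt-5.3)".
HEADER_RE = re.compile(r"^## GPT Consultation \((?P<model>[^)]+)\)\s*$")


def split_consultation(response: str) -> Tuple[Optional[str], str]:
    """Return (model, body). model is None when the header is missing.

    Hypothetical helper: it assumes the header is the first line and
    that everything after it is the consultation body.
    """
    first_line, _, rest = response.partition("\n")
    match = HEADER_RE.match(first_line.strip())
    if match is None:
        # No recognizable header: hand the text back untouched.
        return None, response
    return match.group("model"), rest.lstrip("\n")
```

Keeping the model name separate from the body makes it easy to log where a piece of advice came from before acting on it.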

When to Reach for It

The OMEGA protocol includes built-in guidance on when consultation makes sense. The rule of thumb: if you've been stuck for 10+ minutes or tried 3+ approaches, it's time to phone a friend.
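The rule of thumb is simple enough to write down as a predicate. The sketch below is illustrative only: the thresholds come from the sentence above, while `TaskProgress` and `should_consult` are hypothetical names, not part of OMEGA.

```python
from dataclasses import dataclass

STUCK_MINUTES = 10     # "stuck for 10+ minutes"
FAILED_APPROACHES = 3  # "tried 3+ approaches"


@dataclass
class TaskProgress:
    """Hypothetical record of how the current task is going."""
    minutes_stuck: float
    approaches_tried: int


def should_consult(progress: TaskProgress) -> bool:
    """True when either threshold from the rule of thumb is met."""
    return (
        progress.minutes_stuck >= STUCK_MINUTES
        or progress.approaches_tried >= FAILED_APPROACHES
    )
```

Either condition alone is enough: a long stall with one approach counts, and so does a quick run of three failed attempts.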

Debugging dead ends

You have the stack trace, the context, and three failed hypotheses. Fresh eyes from a different model can spot what you're pattern-matching past.

Irreversible architecture decisions

DB schema, API contracts, auth flows. Before you commit to something hard to undo, get a second opinion on the tradeoffs.

Domain expertise gaps

Crypto, ML pipelines, networking edge cases. Different models have different training signal strengths. Ask the one that knows more.

Fragile solution cross-validation

It works, but it feels wrong. Ask GPT to poke holes before you ship it.

When Not to Use It

Second opinions are cheap. But they're not free: each consultation adds 10-60 seconds of latency. The protocol explicitly tells Claude to skip it when:

- ✗ Simple tasks: formatting, renaming, CRUD. The latency isn't worth it.

- ✗ Speed-sensitive work: each consultation adds 10-60 seconds.

- ✗ Tests already pass: don't second-guess success.

- ✗ Routine OMEGA operations: store, query, checkpoint.
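The skip list above can be read as a set of guard checks that run before any consultation. This is a hedged sketch, not OMEGA's implementation: the `TaskContext` flags and the `skip_reason` function are hypothetical, and how an agent would actually detect each condition is left open.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class TaskContext:
    """Hypothetical flags describing the task at hand."""
    is_simple: bool = False            # formatting, renaming, CRUD
    is_speed_sensitive: bool = False   # cannot absorb 10-60 s of latency
    tests_passing: bool = False        # the work already succeeds
    is_routine_omega_op: bool = False  # store, query, checkpoint


def skip_reason(ctx: TaskContext) -> Optional[str]:
    """Return why consultation should be skipped, or None to allow it."""
    if ctx.is_simple:
        return "simple task: the latency isn't worth it"
    if ctx.is_speed_sensitive:
        return "speed-sensitive work"
    if ctx.tests_passing:
        return "tests already pass"
    if ctx.is_routine_omega_op:
        return "routine OMEGA operation"
    return None
```

Returning a reason string rather than a bare boolean leaves a trail explaining why a consultation did not happen.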

Under the Hood

omega_consult_gpt is built on OMEGA's provider abstraction layer. The function always calls OpenAI directly, regardless of what OMEGA_LLM_PROVIDER is set to. This means your primary agent keeps running on Claude while the consultation tool reaches out to GPT specifically.

The tool exposes five parameters to the agent:

The model itself is not exposed to the agent. That's an operator decision. Set OMEGA_GPT_MODEL=gpt-5.3 in your environment and every consultation routes there. The default is gpt-4o.
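To make the routing concrete, here is a minimal sketch of how the consultation target might be resolved from the environment. The variable names OMEGA_LLM_PROVIDER and OMEGA_GPT_MODEL and the gpt-4o default come from this post. The function `resolve_consult_target` is hypothetical, and treating an empty value as unset is an assumption.

```python
import os
from typing import Mapping, Tuple

DEFAULT_GPT_MODEL = "gpt-4o"  # default stated in the post


def resolve_consult_target(env: Mapping[str, str] = os.environ) -> Tuple[str, str]:
    """Return (provider, model) for a consultation call.

    Hypothetical helper. OMEGA_LLM_PROVIDER is deliberately never read:
    it selects the primary LLM, and consultations always go to OpenAI.
    """
    provider = "openai"
    # Assumption: an empty or whitespace-only value falls back to the default.
    model = env.get("OMEGA_GPT_MODEL", "").strip() or DEFAULT_GPT_MODEL
    return provider, model
```

Because the agent never passes a model name, changing OMEGA_GPT_MODEL is the only way to change which GPT model answers.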

Setup

Configuration

One env var. Zero config files.

The tool appears in your MCP tool list automatically. No server restart is required if you're using the daemon.

omega_consult_gpt is a Pro feature. It's part of OMEGA's multi-model architecture, the same provider abstraction that lets you swap the primary LLM via OMEGA_LLM_PROVIDER.

What This Enables Next

This is the first step toward model-aware workflows in OMEGA. The consultation tool proves the pattern: keep one model primary, bring others in selectively. Future directions include:

- Model-aware protocol: omega_protocol() output that adapts its guidance based on which LLM is running

- Automatic consultation triggers: the protocol already tells Claude when to consult; future versions could trigger it automatically on repeated failures

- Multi-model memory: storing which model produced which insight, so future queries can weight responses by source

Second opinions are cheap. Postmortems are not. Give your agent a phone-a-friend lifeline and let it use the best tool for the job.

Get started

Two commands. Zero cloud. Full memory.

Then add your OpenAI key and omega_consult_gpt is ready.