OMEGA on a Phone: Local AI Memory on Samsung S25 Ultra

We ran a feasibility study: sub-5ms semantic search on the NPU, 120 MB total, fully offline. The phone is overpowered for the job.

Engineering decisions, benchmark deep-dives, and lessons from building an AI memory system in the open.

Obsidian's built-in search is keyword-only. The OMEGA plugin adds semantic search, resurfacing, and agent memory to your vault, all running locally.

Not all memories are equal. OMEGA uses importance-weighted exponential decay inspired by FadeMem and cognitive science to keep signal high.

Cursor removed its Memories feature in 3.0. OMEGA replaces it with persistent, editor-agnostic memory via MCP. Setup takes two minutes.

Cisco found that malicious npm packages can poison Claude Code's MEMORY.md. OMEGA's encrypted SQLite storage eliminates this attack surface.

The Claude Code plugin ecosystem is massive. But every plugin starts from zero each session. OMEGA adds the memory layer.

Karpathy proposed an LLM-maintained knowledge base with Obsidian as the frontend. OMEGA fills the scaling gap with semantic search and persistent memory.

Every fund has GPT-5 and Claude. The edge is compounded institutional knowledge. Family offices: keep it on your machine.

Turing Post published seven emerging memory architectures for AI agents. Five of them describe things OMEGA already ships. Here's the mapping.

Businesses spend millions on prompt engineering and model selection. The real intellectual property is institutional memory: the accumulated decisions, lessons, and context that compound over time.

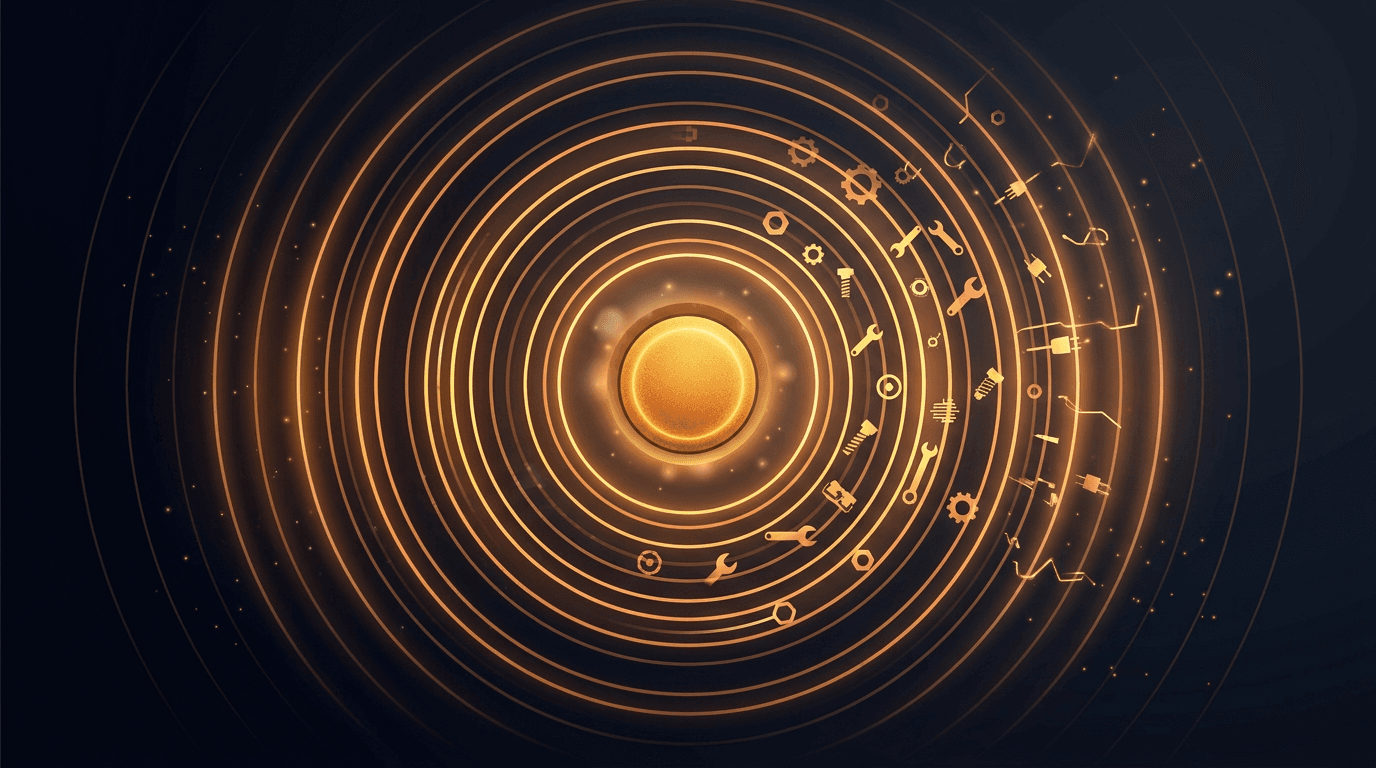

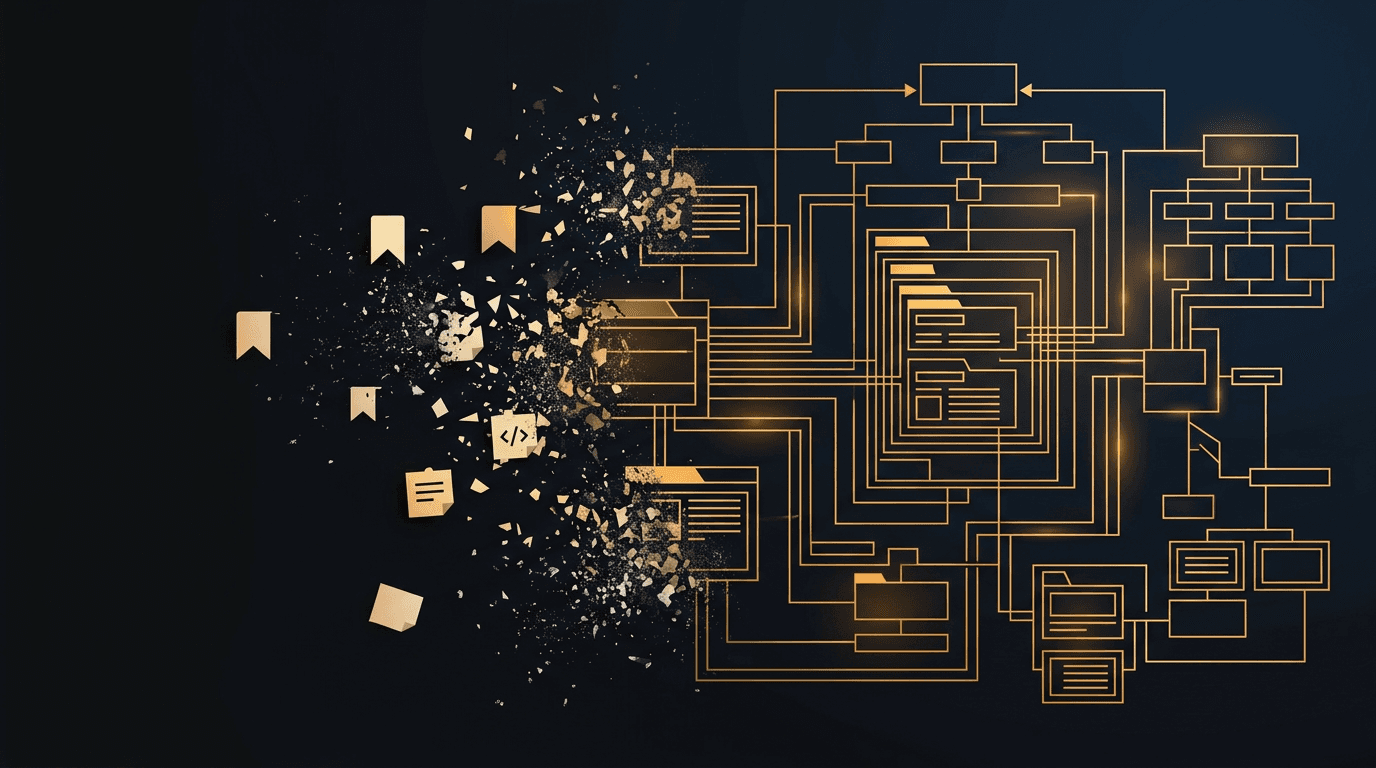

There are 283 MCP servers in the memory category. Most are markdown files with a search bar. Here's why we stopped calling OMEGA a memory layer.

AI agents write 41% of code but produce 1.7x more bugs. 80% of AI review comments are noise. omega_review combines persistent memory, multi-agent specialist panels, and hybrid static+LLM analysis to fix code review.

omega-obsidian indexes your Obsidian vault with semantic embeddings, knowledge graph traversal, and write-back. Six MCP tools, persistent index, one pip install.

CrewAI agents forget everything between runs. OMEGA is the first persistent memory integration for CrewAI, adding semantic dedup, contradiction detection, and cross-session persistence. Three lines to set up.

MEMORY.md is capped at 200 lines with no semantic search, no dedup, and no cross-project recall. OMEGA is the Claude Code MCP memory alternative that fixes all six limitations.

QMD is an excellent local search engine for markdown files. But retrieval is not memory. The Memory Ladder framework explains the difference.

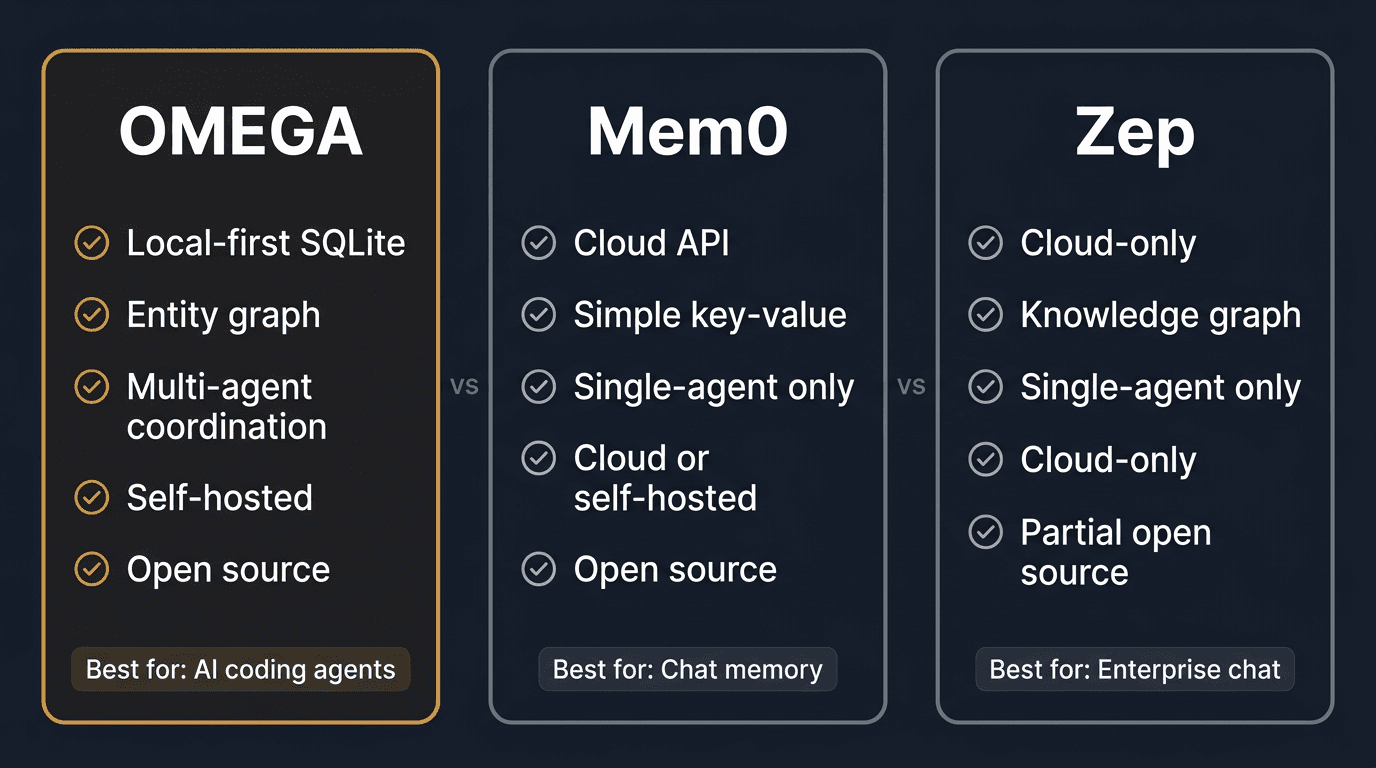

AWS just named Mem0 its "exclusive memory provider" for 14M+ developers. What that actually means, and why defaults are more dangerous than lock-in.

We are committing the greatest intellectual property theft in history, and the victim is ourselves.

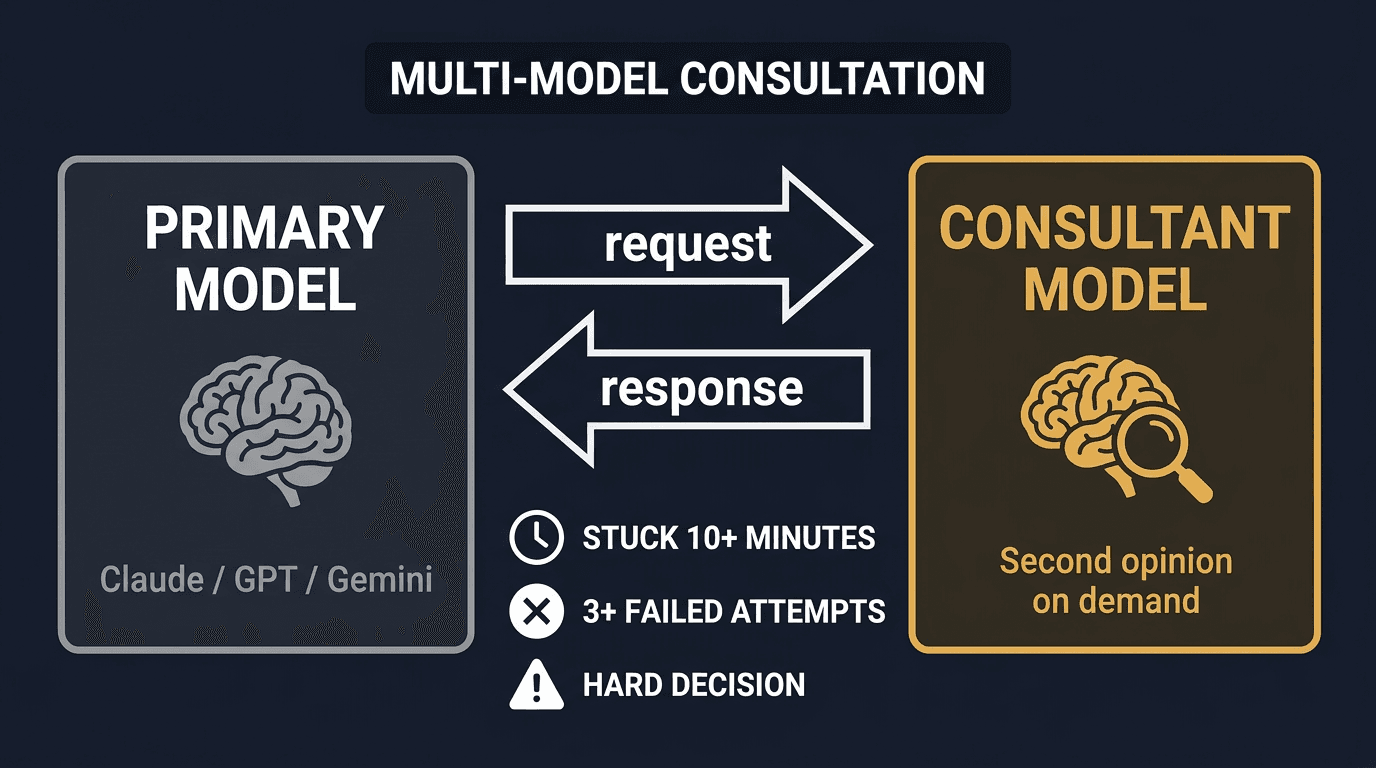

Introducing omega_consult_gpt: a new MCP tool that lets Claude agents consult GPT-5.3 mid-session for a second opinion on hard problems. Pro feature, one env var to configure.

LangChain added persistent memory to Agent Builder on Feb 19. Virtual filesystem in Postgres vs semantic store in SQLite. Honest comparison on 8 dimensions.

Claude Code Remote Control ships full MCP server access. That means your entire OMEGA memory is now reachable from any device with a browser.

The AGI bottleneck is not intelligence. It is verification. We use Catalini's framework from MIT to explain why memory is the missing verification infrastructure for AI agents.

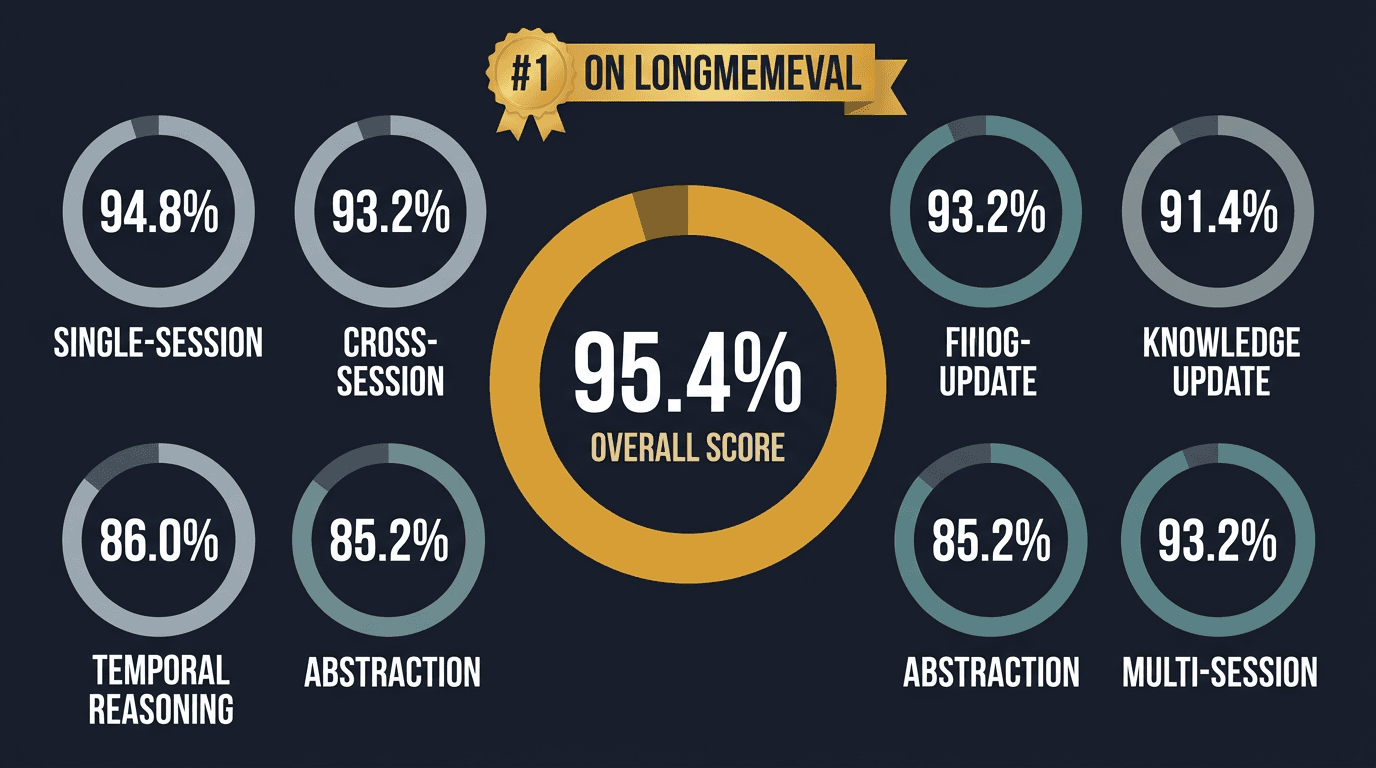

Mastra's observational memory scores 94.87% on LongMemEval. But the benchmark hides a critical limitation: when the session ends, the memories are gone. External vs in-context memory, explained.

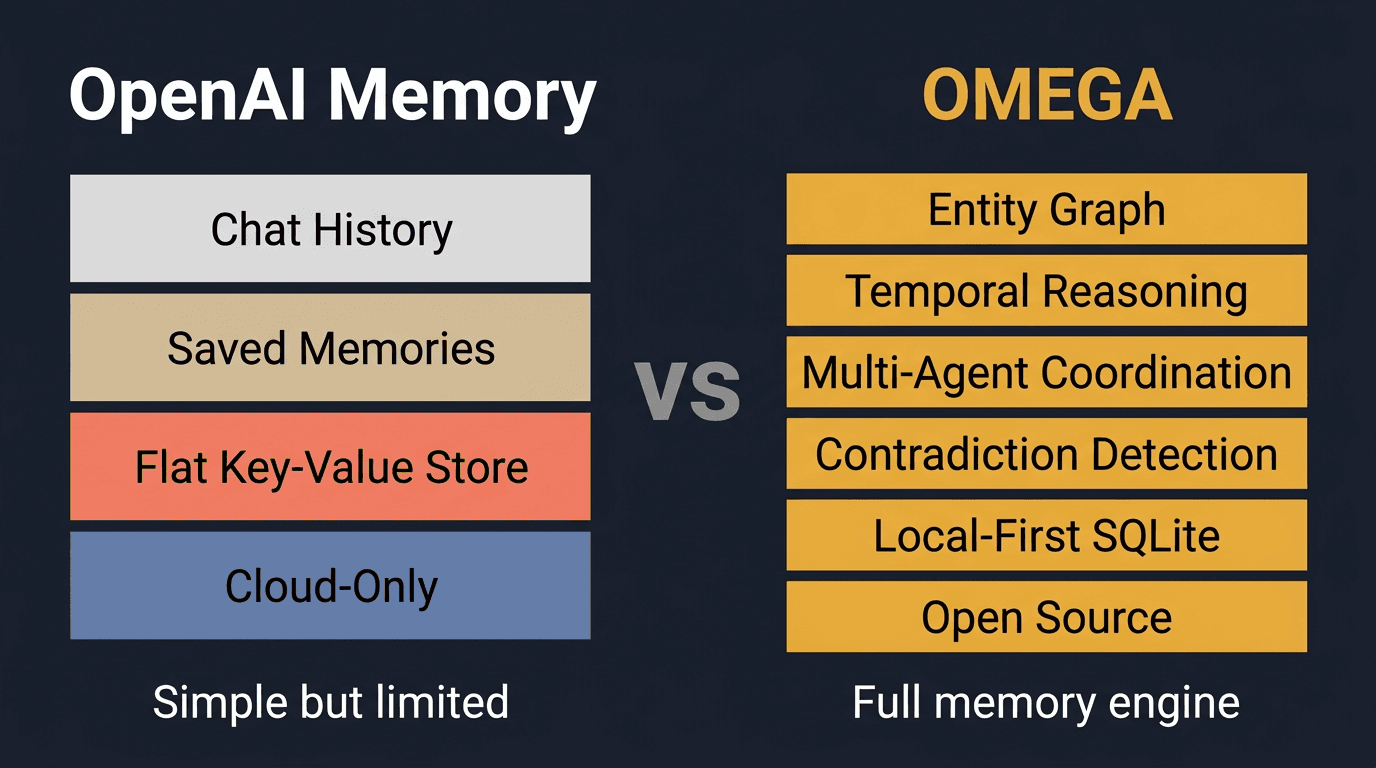

Reverse-engineered ChatGPT memory architecture, the Conversations API gap, and a data-backed comparison with OMEGA. No RAG, no vector DB, just context injection.

Claude Desktop users aren't developers. They can't pip install. So we built one-click installers that bundle Python, install OMEGA, and auto-configure Claude Desktop. Zero terminal commands.

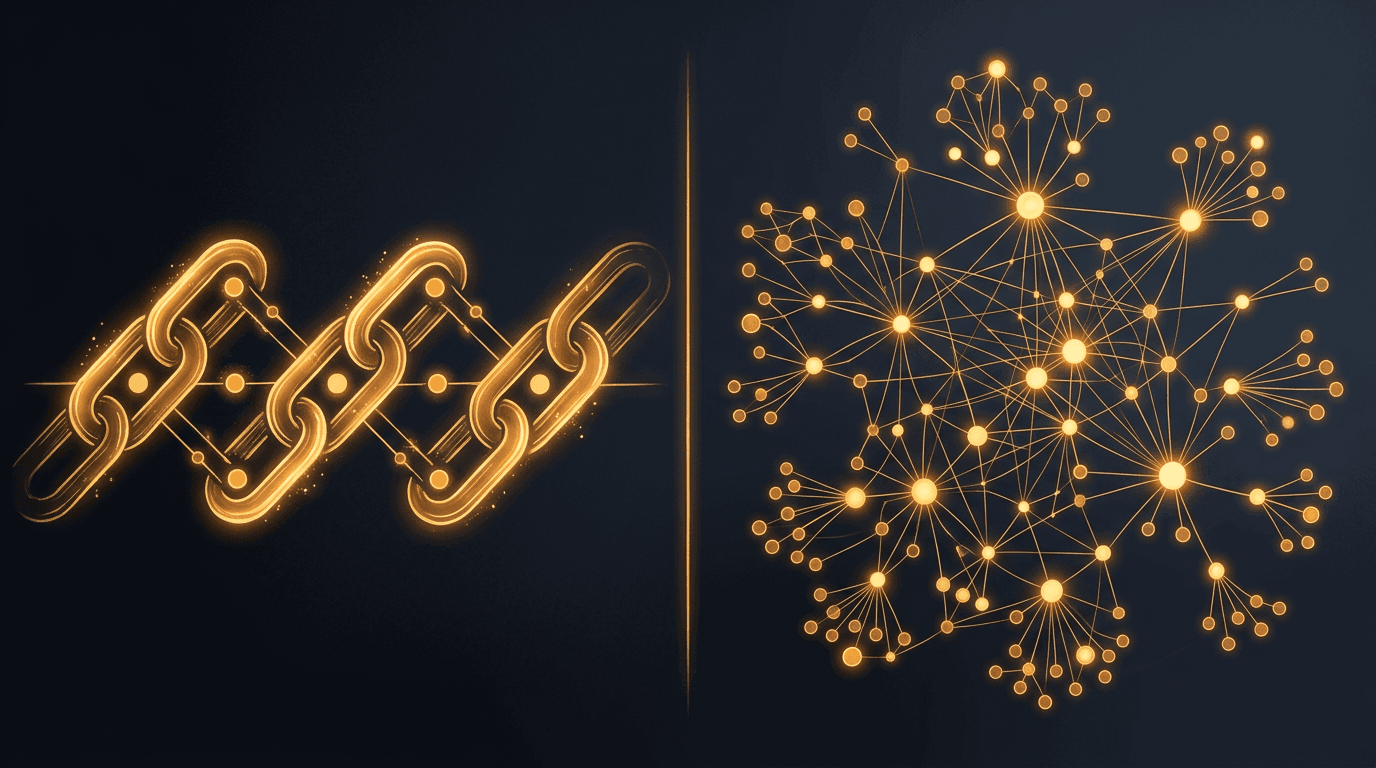

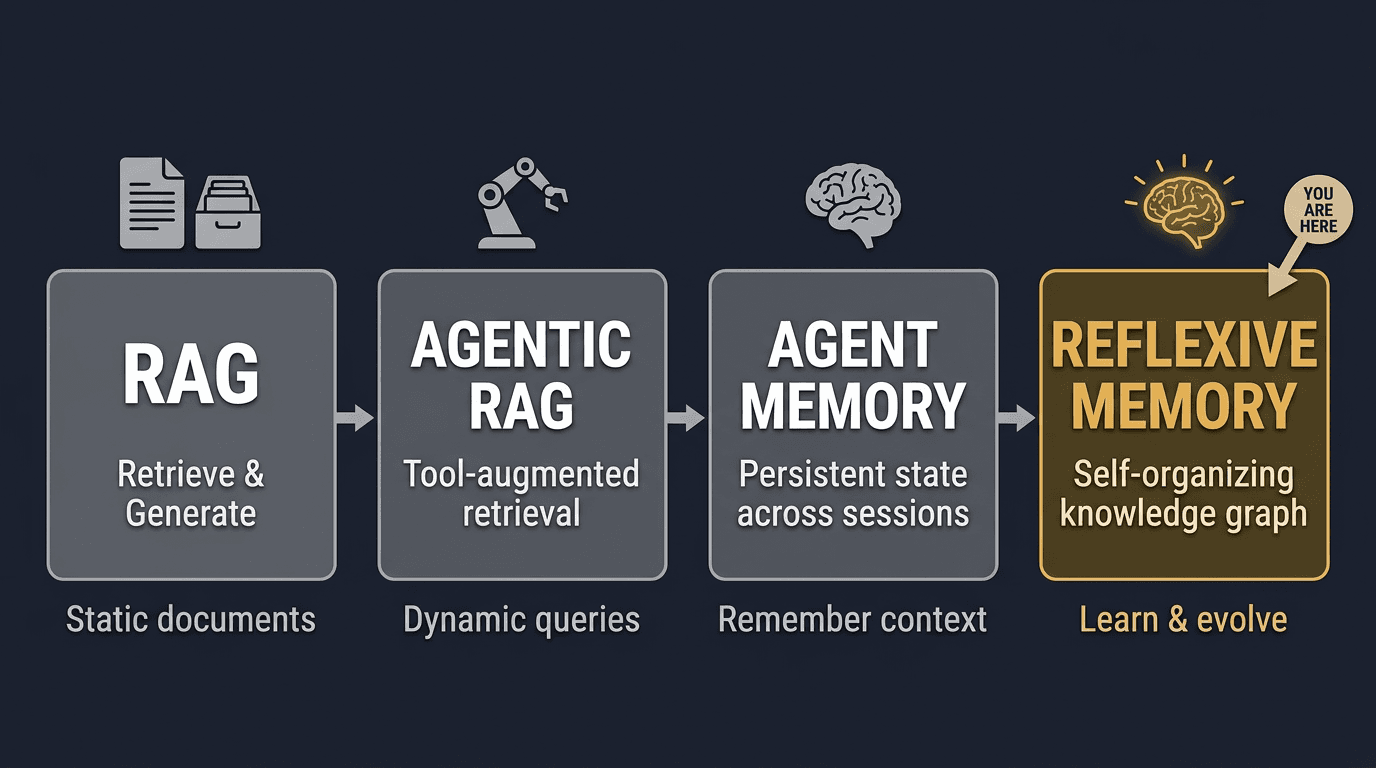

The evolution from RAG to agentic RAG to agent memory, and why the next stage is reflexive memory that can audit, evolve, and forget its own knowledge.

LEARNING.md works until it doesn't. Five failure modes of flat-file memory, and what a real store-and-query pipeline looks like under the hood.

OMEGA observes your tool usage, git habits, session timing, and file relationships to build a behavioral profile. Eight SQL-only extractors, zero LLM calls, full temporal decay.

Your AI agent forgets everything between sessions. Two commands fix that. Works with Claude Code, Cursor, Windsurf, and any MCP client.

OMEGA now re-scores search results with a neural cross-encoder and automatically detects when new memories contradict existing ones. Zero LLM calls, fully local.

OpenClaw is the most popular AI agent (194K+ stars), but it forgets everything between sessions. Step-by-step guide to adding persistent, semantic memory via MCP.

Supermemory, Mem0, and bookmark-sync tools are built for consumer knowledge. Here's why coding agents need decision trails, lesson learning, and intelligent forgetting.

Three architectures. Two benchmarks. Real numbers. Which AI memory system should you actually use in 2026?

Every AI memory system claims high recall. None have been tested at 1,000 sessions. So I built the benchmark that does.

How a solo developer with zero funding built the top-ranked AI memory system: 95.4% task-averaged accuracy, beating $13M-funded teams with a local-first architecture.