How OpenAI Memory Actually Works

Reverse-engineered architecture. The developer gap. And why agents need something purpose-built.

OpenAI built the most widely-used AI memory system. Hundreds of millions of people use it every day in ChatGPT. But what's actually happening under the hood? And more importantly: can developers use any of it for their own agents?

I reverse-engineered ChatGPT's memory architecture, dug through the Conversations API and Agents SDK, and compared everything against OMEGA, the memory system I built. I'm biased, and I'll tell you where OpenAI is genuinely better. But the architectural differences are real, and they matter.

How ChatGPT Memory Actually Works

Here's the part most people get wrong: ChatGPT's memory does not use RAG. It does not use a vector database. It does not use a knowledge graph. The entire system is pre-computed summaries injected into the system prompt.

Reverse-engineering analysis reveals a six-layer context injection architecture. Every time you start a conversation, ChatGPT receives all of these layers before your first message:

Saved Memories

Permanent. Explicit facts the user asked ChatGPT to remember. Numbered entries with timestamps. Durable until deleted.

Response Preferences

Inferred, evolving. Inferred behavioral patterns with confidence scores. ~15 entries tracking format and style preferences.

Past Conversation Topics

Summarized. Historical summaries from earlier conversations. ~8 high-level topic abstractions per mature account.

User Insights

Derived. Derived personal information: name, location, expertise, interests. Generated from conversation analysis.

Recent Conversations

Rolling window. ~40 chat summaries with timestamps. User messages only (no assistant responses). Separated by delimiters.

Interaction Metadata

Automatic. Device info, usage statistics, account age, location data, behavioral patterns. 17-19 data points.

The critical design decision: when context space runs low, current session messages are trimmed first, while permanent saved memories and summaries are prioritized. Long-term personalization wins over short-term context. This is a reasonable tradeoff for a consumer chatbot, but a dealbreaker for a coding agent that needs to remember what happened 10 minutes ago in the current task.

There is no search step. No query at retrieval time. The model simply sees whatever was pre-computed and injected. If a fact wasn't selected for injection, it doesn't exist for that conversation.
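The trimming behavior described above can be sketched in a few lines. This is an illustration of the idea, not OpenAI's code: `Layer`, `build_context`, the whitespace-based token count, and the budget value are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class Layer:
    """One pre-computed memory layer (hypothetical structure)."""
    name: str
    text: str


def count_tokens(text: str) -> int:
    # Crude stand-in for a real tokenizer: one token per whitespace-separated word.
    return len(text.split())


def build_context(layers: list[Layer], session: list[str], budget: int) -> list[str]:
    """Assemble a prompt: every pre-computed layer first, then as many of the
    most recent session messages as still fit in the remaining budget."""
    # Layers are always included in this sketch; only session messages are trimmed.
    context = [f"[{layer.name}] {layer.text}" for layer in layers]
    used = sum(count_tokens(part) for part in context)

    kept: list[str] = []
    # Walk the session newest-first so the oldest messages are dropped first.
    for message in reversed(session):
        cost = count_tokens(message)
        if used + cost > budget:
            break
        kept.append(message)
        used += cost

    # Restore chronological order for the messages that survived.
    return context + list(reversed(kept))
```

Note that nothing in `build_context` looks at the user's question. Whatever was pre-computed goes in, and the current session absorbs the shortfall.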

What OpenAI Gets Right

Credit where it's due. OpenAI nailed several things.

The April 2025 update was significant. Before it, ChatGPT only remembered things you explicitly asked it to save. After it, ChatGPT references your entire conversation history, building a richer profile over time. For a consumer product, this is exactly right.

The Developer Gap

Here is where the story changes. OpenAI built a great memory system for ChatGPT. Then they did not give any of it to developers.

No API access to ChatGPT memory

The memory system that powers ChatGPT is a product feature, not a platform capability. Developers building their own agents cannot use it. There is no endpoint to store, query, or manage memories programmatically.

Conversations API is not memory

The Conversations API (2025) persists messages and tool calls within a single conversation. It is conversation-level state, not cross-conversation semantic memory. You cannot search across conversations, detect contradictions, or retrieve facts from months ago.

Agents SDK requires DIY everything

The OpenAI Agents SDK provides RunContextWrapper for structured state and supports storage backends (SQLite, Redis, Dapr). But you build the entire memory layer yourself: storage schemas, retrieval logic, deduplication, forgetting, contradiction handling. It is a framework, not a memory system.

To be clear: OpenAI offers excellent building blocks. The Responses API is well-designed. The Agents SDK is capable. The /responses/compact endpoint for context compression is clever engineering. But none of these are a memory system. They are primitives that you assemble into one yourself.

If you want cross-session semantic memory, contradiction detection, intelligent forgetting, and multi-agent coordination, you build all of it from scratch. Or you use a purpose-built memory system.
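To give a sense of what "build it yourself" means in practice, here is a minimal sketch of just two of those pieces, a storage schema and deduplication, using Python's standard `sqlite3` module. The table layout and the function names (`open_store`, `remember`, `recall`) are hypothetical, and the keyword lookup is a placeholder for real retrieval logic. It does not use the Agents SDK at all.

```python
import hashlib
import sqlite3
import time


def open_store(path: str = ":memory:") -> sqlite3.Connection:
    """Open (or create) a tiny memory store. The schema is an assumption."""
    conn = sqlite3.connect(path)
    conn.execute(
        """CREATE TABLE IF NOT EXISTS memories (
               id INTEGER PRIMARY KEY,
               digest TEXT UNIQUE,      -- hash of normalized text, used for dedup
               content TEXT NOT NULL,
               created_at REAL NOT NULL
           )"""
    )
    return conn


def _digest(content: str) -> str:
    # Normalize case and whitespace so trivially different copies collide.
    normalized = " ".join(content.lower().split())
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def remember(conn: sqlite3.Connection, content: str) -> bool:
    """Store a fact. Returns False if an equivalent fact is already stored."""
    try:
        with conn:  # commits on success, rolls back on error
            conn.execute(
                "INSERT INTO memories (digest, content, created_at) VALUES (?, ?, ?)",
                (_digest(content), content, time.time()),
            )
        return True
    except sqlite3.IntegrityError:
        # The UNIQUE constraint on digest rejected a duplicate.
        return False


def recall(conn: sqlite3.Connection, keyword: str, limit: int = 5) -> list[str]:
    """Naive keyword lookup, newest first. Not semantic search."""
    rows = conn.execute(
        "SELECT content FROM memories WHERE content LIKE ? "
        "ORDER BY created_at DESC LIMIT ?",
        (f"%{keyword}%", limit),
    )
    return [row[0] for row in rows]
```

Even this toy version leaves forgetting, contradiction handling, and anything resembling semantic retrieval unaddressed, which is the point of the section above.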

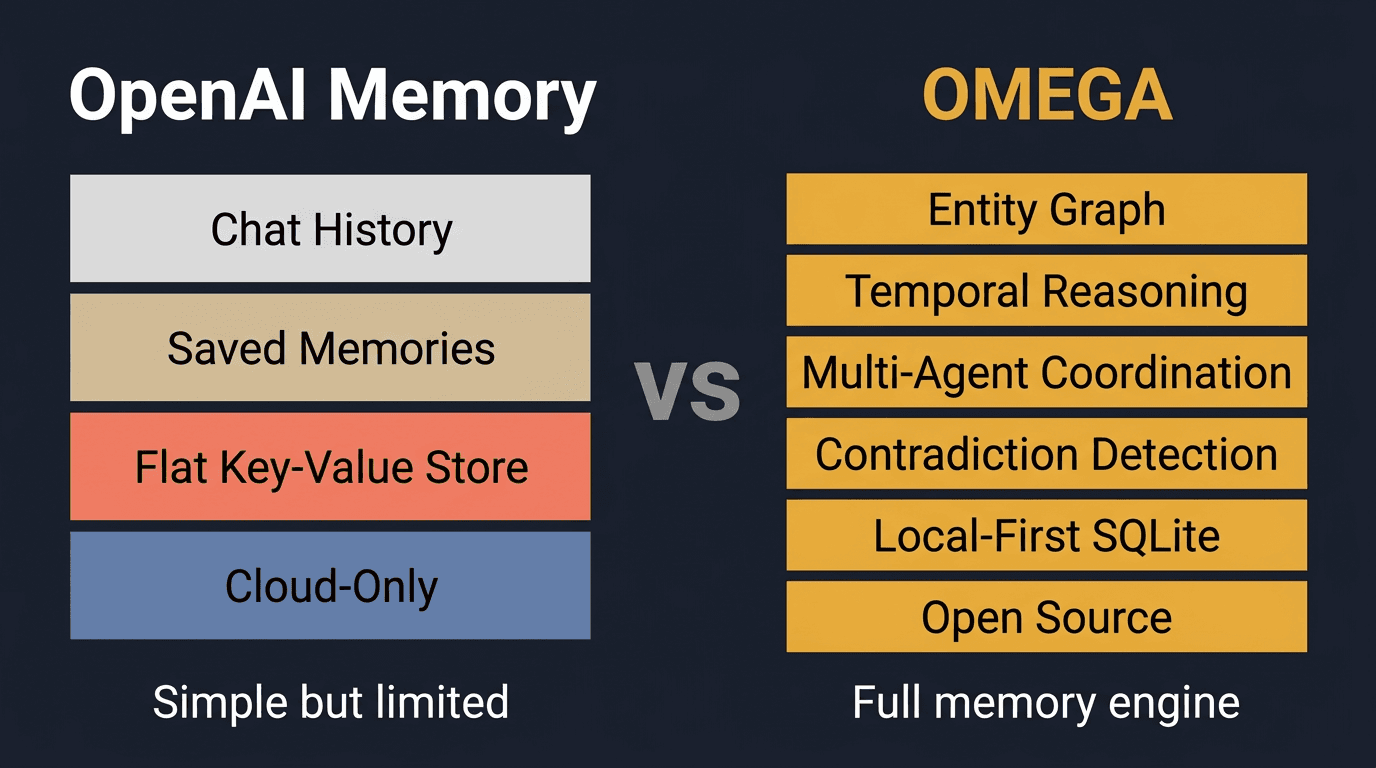

Architecture: Side by Side

Three systems, three architectures. ChatGPT's consumer memory, OpenAI's developer tools, and OMEGA:

| Dimension | ChatGPT Memory | Conversations API | OMEGA |
|---|---|---|---|
| Memory model | Context injection (pre-computed summaries in system prompt) | Conversation-level state persistence (messages + tool calls) | Semantic store with hybrid BM25 + vector retrieval |
| Cross-session memory | Yes (summaries survive across all chats) | No (scoped to one conversation object) | Yes (full semantic search across all stored memories) |
| Data location | OpenAI servers | OpenAI servers | Single SQLite file on your machine |
| Search capability | None (model sees pre-selected context) | None (linear message history) | Hybrid BM25 + vector search, semantic reranking |
| Memory capacity | Limited (memory full errors, mitigated Oct 2025) | Unlimited messages per conversation | Unlimited (SQLite scales to millions of entries) |
| Forgetting | Automatic, opaque (less relevant memories fade to background) | Manual deletion or /responses/compact endpoint | Intelligent forgetting with audit trail and confidence decay |
| Privacy | Data on OpenAI servers, may be used for training | Data on OpenAI servers, API data policy applies | Never leaves your machine, zero network calls |
| Developer access | None (product feature only) | REST API (Responses API) | MCP protocol with 12 tools |
| Cost | $20-200/mo (ChatGPT subscription) | Per-token API pricing | $0 (fully local, no API calls) |
| LLM lock-in | OpenAI only | OpenAI only | Works with any LLM (Claude, GPT, Gemini, local models) |

The Fundamental Difference

The architectural split comes down to one decision: when do you decide what's relevant?

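Pre-compute and inject (ChatGPT): relevance is decided ahead of time, when summaries are generated and placed in the system prompt.

Store and retrieve (OMEGA): relevance is decided at query time, by searching everything that was stored.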

OpenAI optimizes for speed at the cost of precision. When context fills up, the current session gets trimmed to preserve long-term memories. For a chatbot that needs to feel personalized, this is fine.

For a coding agent that stored a critical architectural decision 200 sessions ago and needs to find it now, you need search. You need a system that can answer “what did I decide about the database schema for the auth module?” by actually searching, not by hoping the right summary was pre-injected.
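Here is a minimal sketch of what store-and-retrieve looks like for that kind of question. It is not OMEGA's code. It assumes reciprocal rank fusion to combine a BM25 ranking with a vector ranking, and it swaps in a toy character-trigram vector where a real system would use an embedding model. All function names are hypothetical.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    return re.findall(r"[a-z0-9]+", text.lower())


def bm25_scores(query: str, docs: list[str], k1: float = 1.5, b: float = 0.75) -> list[float]:
    """Score every document against the query with the Okapi BM25 formula."""
    if not docs:
        return []
    tokenized = [tokenize(d) for d in docs]
    avg_len = (sum(len(t) for t in tokenized) / len(tokenized)) or 1.0
    doc_freq = Counter(term for tokens in tokenized for term in set(tokens))
    n = len(docs)
    scores = []
    for tokens in tokenized:
        tf = Counter(tokens)
        score = 0.0
        for term in tokenize(query):
            if term not in tf:
                continue
            idf = math.log(1 + (n - doc_freq[term] + 0.5) / (doc_freq[term] + 0.5))
            length_norm = k1 * (1 - b + b * len(tokens) / avg_len)
            score += idf * tf[term] * (k1 + 1) / (tf[term] + length_norm)
        scores.append(score)
    return scores


def embed(text: str) -> Counter:
    # Toy stand-in for an embedding model: character-trigram counts.
    s = " ".join(tokenize(text))
    return Counter(s[i:i + 3] for i in range(len(s) - 2))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[k] * b[k] for k in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def hybrid_search(query: str, docs: list[str], top_k: int = 3, rrf_k: int = 60) -> list[str]:
    """Fuse the lexical and vector rankings with reciprocal rank fusion."""
    lexical = bm25_scores(query, docs)
    query_vec = embed(query)
    semantic = [cosine(query_vec, embed(d)) for d in docs]

    fused = [0.0] * len(docs)
    for scores in (lexical, semantic):
        ranked = sorted(range(len(docs)), key=lambda i: scores[i], reverse=True)
        for rank, i in enumerate(ranked, start=1):
            fused[i] += 1.0 / (rrf_k + rank)

    best = sorted(range(len(docs)), key=lambda i: fused[i], reverse=True)[:top_k]
    return [docs[i] for i in best]
```

The key property is that the query drives the result. The store can hold far more than fits in a context window, because only the top few hits are ever placed in the prompt.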

OpenAI has not published any LongMemEval or comparable benchmark results for ChatGPT memory. Without published numbers, we cannot make a direct accuracy comparison. What we can say: context injection is fundamentally limited by context window size, while a dedicated retrieval system scales to millions of entries.

What Developers Actually Need

When you're building AI agents, you need capabilities that neither ChatGPT's memory nor the Conversations API provide:

Semantic search

Hybrid BM25 + vector search across all stored memories

Contradiction detection

Cross-encoder model identifies when new facts conflict with existing ones

Intelligent forgetting

Confidence decay with full audit trail, not opaque deletion

Multi-agent coordination

File claims, task queues, inter-agent messaging, session management

Entity-scoped memory

Per-project isolation so agents don't cross-contaminate context

Checkpoint/resume

Save and restore agent state across sessions for long-running tasks

Audit trail

Every store, update, and deletion is logged with timestamps and sources

Vendor independence

Works with Claude, GPT, Gemini, local models. No LLM lock-in.

These are not nice-to-haves. Contradiction detection prevents your agent from acting on outdated information. Intelligent forgetting keeps the memory store useful as it grows. Multi-agent coordination prevents two agents from modifying the same file simultaneously. Audit trails let you debug why your agent made a particular decision.
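As an illustration of forgetting that stays debuggable, here is a minimal sketch of confidence decay with an audit log. The exponential half-life model, the threshold, and the names `Memory`, `MemoryStore`, and `sweep` are assumptions for the example, not a description of how OMEGA implements it.

```python
from dataclasses import dataclass, field

SECONDS_PER_DAY = 86400


@dataclass
class Memory:
    content: str
    confidence: float     # 0.0 to 1.0 at the time of last access
    last_accessed: float  # seconds since epoch


@dataclass
class MemoryStore:
    half_life_days: float = 30.0  # assumed: confidence halves every 30 idle days
    forget_below: float = 0.2     # assumed threshold for forgetting
    memories: list = field(default_factory=list)
    audit_log: list = field(default_factory=list)

    def decayed(self, memory: Memory, now: float) -> float:
        """Confidence after exponential decay since the last access."""
        age_days = max(0.0, (now - memory.last_accessed) / SECONDS_PER_DAY)
        return memory.confidence * 0.5 ** (age_days / self.half_life_days)

    def sweep(self, now: float) -> None:
        """Drop memories whose decayed confidence is too low, logging each one."""
        kept = []
        for memory in self.memories:
            score = self.decayed(memory, now)
            if score < self.forget_below:
                # Record what was forgotten, when, and why, instead of
                # deleting silently.
                self.audit_log.append(
                    {
                        "action": "forget",
                        "content": memory.content,
                        "score": round(score, 3),
                        "at": now,
                    }
                )
            else:
                kept.append(memory)
        self.memories = kept
```

Because every removal leaves a log entry with the score that triggered it, you can later answer "why did the agent no longer know this?" from the log alone.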

OpenAI's architecture makes these fundamentally difficult to add. When memory is summaries injected into a prompt, there is no structured store to search, no timestamps to track, no relationships to traverse.
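With a structured store, by contrast, a coordination primitive such as a file claim is a small amount of code. The sketch below assumes a SQLite table keyed on file path, so the database's primary-key constraint decides which agent wins. The table and the functions `open_claims`, `claim_file`, and `release_file` are hypothetical names for illustration.

```python
import sqlite3
import time


def open_claims(path: str = ":memory:") -> sqlite3.Connection:
    conn = sqlite3.connect(path)
    conn.execute(
        """CREATE TABLE IF NOT EXISTS file_claims (
               path TEXT PRIMARY KEY,   -- one claim per file at a time
               agent TEXT NOT NULL,
               claimed_at REAL NOT NULL
           )"""
    )
    return conn


def claim_file(conn: sqlite3.Connection, path: str, agent: str) -> bool:
    """Try to claim a file. Returns False if another claim already exists."""
    try:
        with conn:  # commits on success, rolls back on error
            conn.execute(
                "INSERT INTO file_claims (path, agent, claimed_at) VALUES (?, ?, ?)",
                (path, agent, time.time()),
            )
        return True
    except sqlite3.IntegrityError:
        # The primary key rejected a second claim on the same path.
        return False


def release_file(conn: sqlite3.Connection, path: str, agent: str) -> bool:
    """Release a claim. Only the agent that holds it can release it."""
    with conn:
        cur = conn.execute(
            "DELETE FROM file_claims WHERE path = ? AND agent = ?",
            (path, agent),
        )
    return cur.rowcount == 1
```

An agent would call `claim_file` before editing and `release_file` afterwards. A second agent that gets `False` back knows to wait or pick other work.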

A Note on Security

ChatGPT's memory has been the subject of serious security research. Researchers demonstrated “ZombieAgent” exploits where malicious instructions injected into ChatGPT's memory via CSRF attacks persist across all devices and sessions. Once memory is tainted, every response can leak data.

This is a fundamental risk of cloud-hosted memory. If your memories live on someone else's servers and are automatically populated from browsed content, the attack surface is large.

OMEGA's local-first architecture sidesteps this entirely. Your memories live in a SQLite file on your machine. Nothing is sent to any server. There is no network-based attack surface for memory injection.

Honest Tradeoffs

OpenAI is a $300B company with thousands of engineers. OMEGA is a solo project. Pretending they don't have advantages would be dishonest:

OpenAI is better if you need...

- ✓ Consumer-facing product with zero configuration

- ✓ Memory for ChatGPT specifically (it already has it)

- ✓ Massive scale without managing infrastructure

- ✓ Tight integration with the OpenAI ecosystem (GPTs, plugins, Assistants API)

OMEGA is better if you need...

- ✓ Persistent memory for your own AI agents (not ChatGPT)

- ✓ Local-first privacy where data never leaves your machine

- ✓ Benchmark-proven accuracy (95.4% LongMemEval, #1 overall)

- ✓ Semantic search, contradiction detection, and intelligent forgetting

- ✓ Multi-agent coordination (file claims, task queues, messaging)

- ✓ Vendor independence across any LLM provider

The Bottom Line

OpenAI built memory for ChatGPT. It works well for what it is: a consumer product that feels personalized. The April 2025 update made it significantly better. The automatic memory management is genuinely impressive engineering.

But OpenAI did not build memory for developers. The Conversations API provides conversation-level state. The Agents SDK provides a framework. Neither provides the thing developers actually need: a persistent, searchable, intelligent memory system that works across sessions, across agents, and across LLM providers.

That is what OMEGA is. A single SQLite file, bundled embeddings, zero API keys, and the highest accuracy on the standard benchmark. If you're building AI agents that need to remember, getting started takes 30 seconds.

- Jason Sosa, builder of OMEGA

Two commands. Zero cloud. Persistent memory for any AI agent.